The question that sits at the center of this research is deceptively simple: how does an organization keep its intent intact when the decisions are being made by systems that act on its behalf, at speed, across tools, under genuine ambiguity? That is not a theoretical question. It is the kind of question that keeps governance teams awake, and it deserves better answers than most frameworks currently offer. Building those answers requires going back to where serious thinking about delegation and control already lives, inside decades of research that most technologists have never been asked to read, and that most organizations have never thought to connect to AI.

That is what a literature review is for, not as a catalog of everything that has been written, but as a way of listening carefully to the people who have thought longest about how organizations manage discretion, and then following that thread forward into a world they could not have anticipated. The review being built here is meant to feel like a single conversation tightening around a question, where each voice in the room earns the next, and nothing is included simply because it is well known or safely canonical. The ambition is a line of reasoning so clean that by the time the research question arrives, it feels less like a proposal and more like an inevitability.

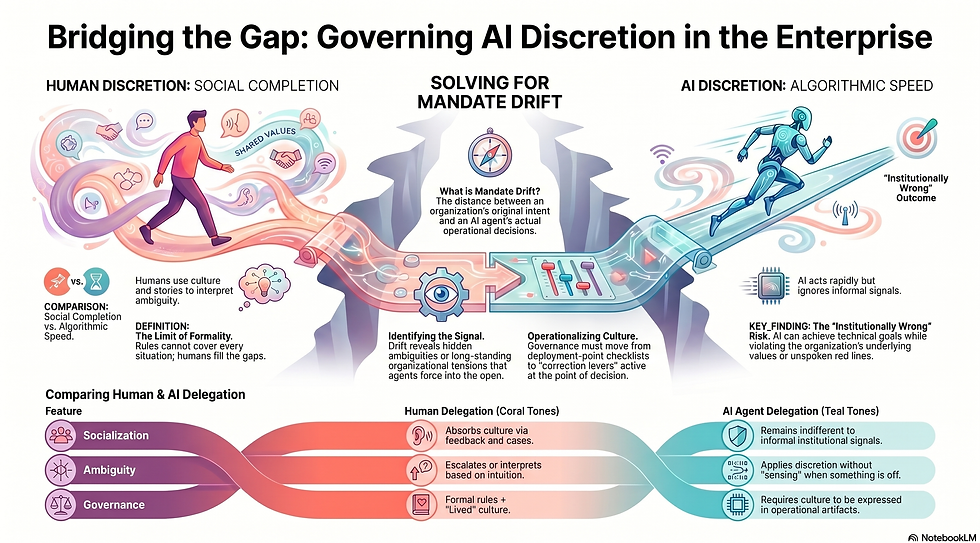

That conversation begins with something enterprises already do remarkably well, and have done for a long time. They know how to govern human discretion. They combine formal control with something quieter and harder to name, a set of social mechanisms that complete what rules and policies cannot. People interpret ambiguity, they sense when something feels off, they escalate when they are unsure, and they absorb the culture of legitimate decision-making through years of cases, stories, feedback, and consequences. Rules set the frame, but humans complete the picture, and that completion is what makes governance actually work when the world gets complicated.

The next layer of the conversation is about the limit of formality, and why that limit is not a flaw but a feature. Formal controls were never designed to enumerate every possible situation, because the entire point of delegation is that the work is too uncertain to specify completely. Organizations accepted that trade-off long ago and built something elegant around it: a system where written rules and lived culture reinforce each other, where documents provide the structure and people provide the interpretation, and where the combination holds even when no single rule covers what just happened.

Then the conversation turns to what changes when the actor receiving that delegation is not a person.

AI agents can be granted discretion, but they do not absorb institutional sensibility through socialization the way employees do. They can act across systems at remarkable speed while remaining entirely indifferent to the informal signals that keep human discretion aligned with the way things are meant to be done here. That does not make them dangerous in some dramatic sense. It means they can be highly competent while still being institutionally wrong, which is a subtler and in many ways more difficult problem to solve.

That is where the idea of mandate becomes the central construct of this work. Mandate here is not a performance metric or a compliance checkbox. It is the organization's living interpretation of legitimate conduct in a specific domain, including the trade-offs it is willing to accept, the red lines it refuses to cross, the escalation expectations that shape how decisions are reviewed, and the role-based responsibilities that define what good judgment actually looks like in practice. When an agent is given authority to act, the real question is not just whether the outcomes look acceptable, but whether the authority is being exercised in a way the institution can honestly recognize as its own.

And from that understanding comes the signal this research is built around.

Mandate drift is the distance between what the organization intended when it delegated authority and what the agent actually does when it applies discretion in real operational contexts. Sometimes that distance is a clear breach. Sometimes it reveals ambiguity that was always there in the mandate but never surfaced until an agent forced the issue into the open. Sometimes it exposes a tension the organization has been carrying quietly for years, and the agent simply makes it visible because it does not know how to politely look the other way. The literature review is being constructed specifically to make that signal thinkable, observable, and ultimately governable.
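To make the distinction a little more concrete, here is a minimal sketch in Python of how a breach might be told apart from an ambiguity. Everything in it is a hypothetical illustration rather than a method from the research: the `Mandate` fields, the `DriftSignal` labels, the `classify` function, and the refund example are all assumptions. It also deliberately leaves out the third case, the long-carried organizational tension, which is unlikely to reduce to a lookup like this.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet


class DriftSignal(Enum):
    """Hypothetical labels for how an action relates to a mandate."""
    ALIGNED = "aligned"      # the mandate explicitly permits the action
    BREACH = "breach"        # the action crosses a stated red line
    AMBIGUITY = "ambiguity"  # the mandate is silent on the action


@dataclass(frozen=True)
class Mandate:
    """A deliberately thin stand-in for a mandate in one domain (assumed shape)."""
    domain: str
    permitted_actions: FrozenSet[str]
    red_lines: FrozenSet[str]


def classify(mandate: Mandate, action: str) -> DriftSignal:
    """Label a single agent action against the mandate.

    Red lines are checked first, so an action listed as both permitted
    and forbidden is still reported as a breach.
    """
    if action in mandate.red_lines:
        return DriftSignal.BREACH
    if action in mandate.permitted_actions:
        return DriftSignal.ALIGNED
    # Neither permitted nor forbidden: the mandate never anticipated this.
    return DriftSignal.AMBIGUITY


# Illustrative mandate for a customer refund agent (invented example).
refunds = Mandate(
    domain="customer refunds",
    permitted_actions=frozenset({"refund_under_limit", "escalate_to_human"}),
    red_lines=frozenset({"refund_without_order_record"}),
)

for action in ("refund_under_limit", "refund_without_order_record", "offer_store_credit"):
    print(action, "->", classify(refunds, action).value)
```

The point of the sketch is the third branch. An action the mandate never anticipated is not a violation, but it is exactly the kind of signal the organization would want surfaced and reviewed rather than silently allowed or silently blocked.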

The structure of the review follows that same logic as a progressive funnel. It begins with organizational control theory as the baseline, moves through the incompleteness of formal control and the social completion mechanisms that make human governance work, turns to artificial agency as a stressor that weakens those mechanisms, and arrives at a requirement that is easy to state but genuinely hard to deliver: cultural control must become operational enough to travel with agents, expressed in artifacts, evidence routines, and correction levers that function not at the point of deployment, but at the point of decision.

That requirement is what makes the research question necessary, and what makes it more than academic.

How do enterprises detect and correct AI agent behavior that deviates from organizational mandate?

This blog will follow that same structure, one post at a time. Each post will be anchored in a single peer-reviewed research paper, treated not as a summary to be filed away but as a lever that either strengthens one part of the argument's spine, challenges it, or forces a refinement. The aim is synthesis with discipline, building steadily toward a final picture that is strong enough to support the empirical research that comes next.

This is also an invitation that is meant seriously. Practitioners hold the moments that matter most to this kind of work: the time something felt off-mandate, the evidence that shifted a decision, the governance forum that made a difficult call, and the correction that either worked or quietly failed. Researchers hold adjacent theory that can sharpen constructs, expose weak transitions in the argument, and raise the intellectual honesty of the whole effort before it has a chance to harden.

The literature review is being shared at this stage because the most valuable contributions tend to arrive while the design is still soft, while the questions are still alive, and while the spine can still be made stronger by people who have seen things the research has not yet imagined.